How the AI Industry Speaks the Language of Public Interest

“The language of public benefit ultimately functions as risk socialisation in service of private return”

Written by Dhruva Nagesh, BSc Politics, Philosophy, and Economics

In 2015, OpenAI incorporated as a nonprofit ‘for the benefit of humanity’. A decade later, it is valued at $840 billion, has lost more than $9 billion in a single year, and has never turned a profit. Between those two facts lies the mechanism: persuading investors, governments, and the public that the returns are coming and the accounting can wait.

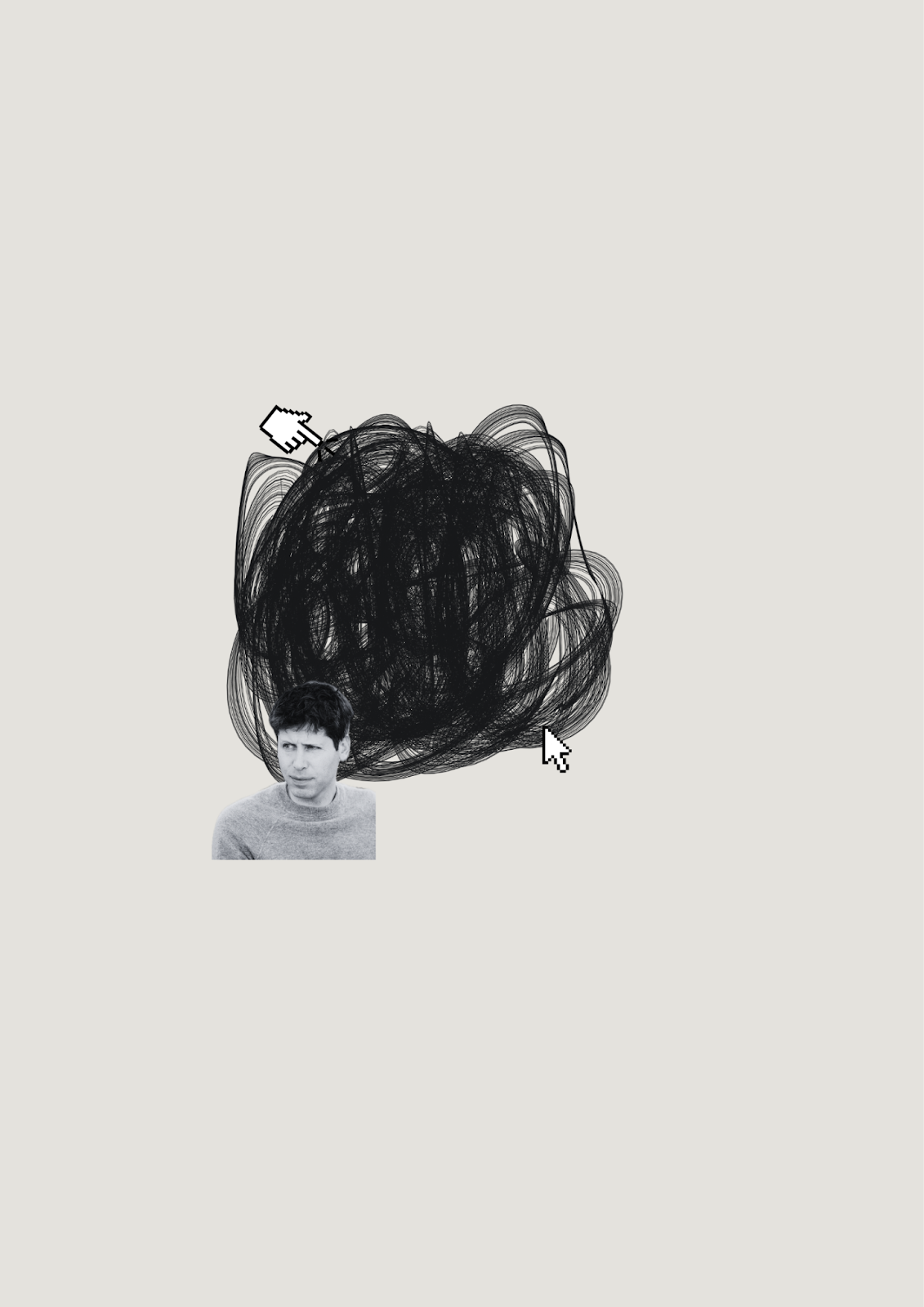

Sam Altman, OpenAI’s CEO, speaks the language of the dispossessed: curing cancer, ending poverty, delivering abundance for everyone. ChatGPT launched for free. The rhetoric of democratisation has always been the front door. In 2019, OpenAI restructured around a ‘capped-profit’ subsidiary, preserving the language of mission while directing returns to investors.

Altman donated $1 million to Trump’s inaugural fund. OpenAI’s President, Greg Brockman, reportedly donated $25 million through a super PAC to Trump’s new ballroom. The White House has since championed Stargate, a private venture pledging up to $500 billion for AI infrastructure with OpenAI at its centre, even as the administration dismantles federal oversight.

The UK’s AI Opportunities Action Plan similarly positions the industry as the engine of national economic renewal. The language of safety and democracy that once justified OpenAI’s nonprofit origins now recasts a speculative wager as a matter of national security. With the race framed against DeepSeek, a Chinese competitor, dissent is dismissed as a threat to development.

Inside the companies that have adopted AI, the story is different. AI use at work has doubled since 2023, and fully AI-led processes nearly doubled last year, yet a recent MIT Media Lab study found that 95% of organisations see no measurable return on their investment. A BetterUp/Stanford study gave a name to what is happening: ‘workslop’, AI-generated content that transfers cognitive labour downstream rather than completing it. Each incident takes nearly two hours to resolve, a hidden cost of $186 per worker a month. Mandated adoption is degrading the organisations it was supposed to liberate, even as governments justify AI strategies with productivity gains the evidence cannot support.

Journalist Karen Hao, in *Empire of AI*, documents how the industry replicates the logic of empire: intellectual property taken without compensation, data annotators in Kenya and Venezuela paid dollars a day for the work that makes the models run, and infrastructure imposed where communities cannot refuse. Demand from data centres is keeping coal plants running past their planned retirement, pumping pollutants into working-class areas. A Bloomberg investigation found that two-thirds of new data centres are in water-stressed regions; one proposed Google facility could consume more water than the local population does. Profits flow to investors. Costs flow to those least able to bear them.

Who this company was built for is visible in the distribution of risk. Investors are insulated by valuation and executives by equity. When oversight interfered with commercial expansion, it was oversight that was removed. The costs are displaced onto workers, communities, and taxpayers. As capital expenditure swells and stock gains concentrate in a handful of firms, that exposure becomes systemic. In any market correction, the losses will not be evenly shared. The language of public benefit ultimately functions as risk socialisation in service of private return.